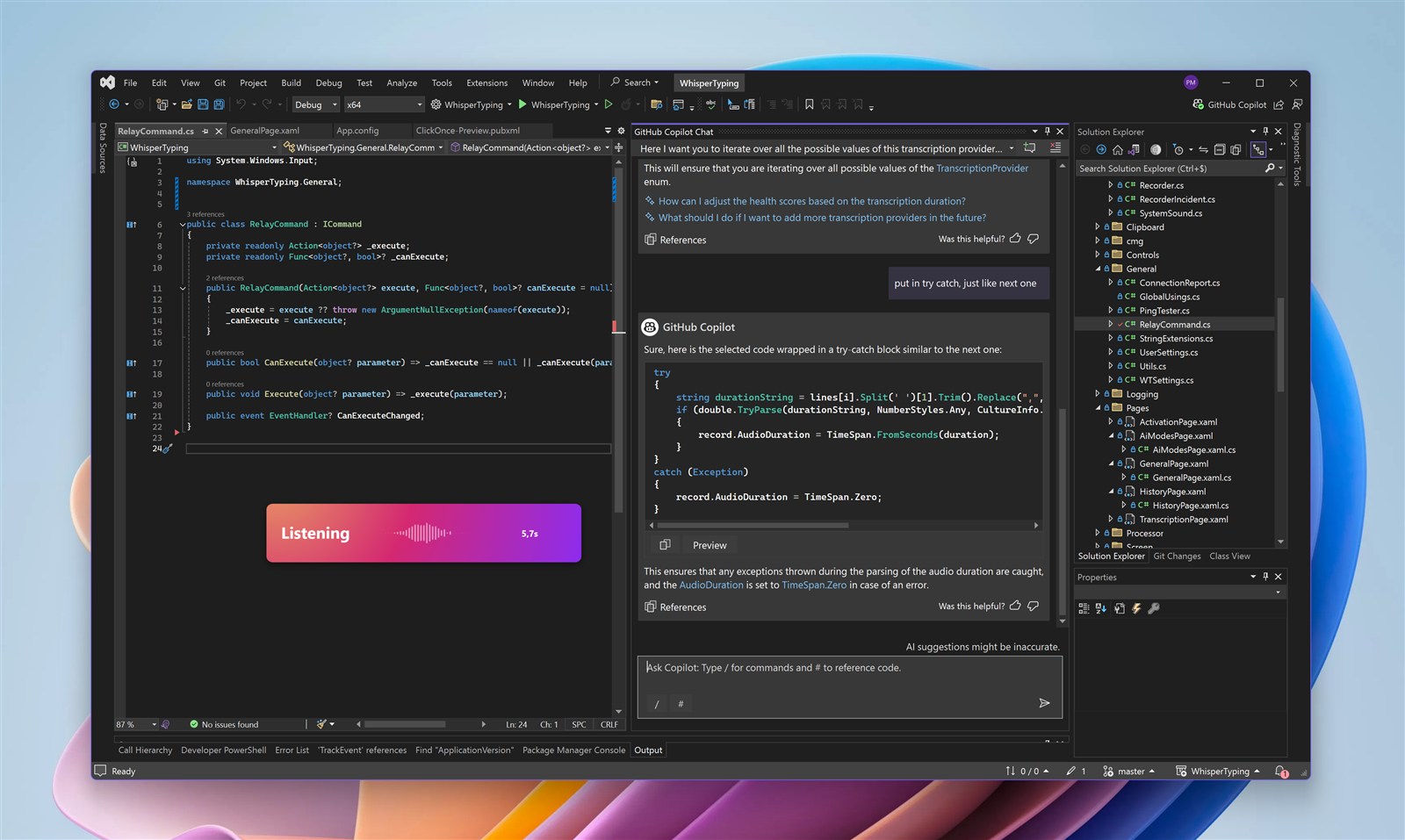

GitHub Copilot is the AI pair programmer millions of developers use daily. Copilot Chat lets you ask questions, explain code, and request changes. And with Copilot CLI now generally available, you can run a full coding agent in your terminal too. With WhisperTyping, you speak to Copilot naturally, whether in your IDE or the command line. Ready to vibe code?

GitHub Copilot Chat

Copilot Chat is built into VS Code, Visual Studio, and JetBrains IDEs. You can ask it to explain code, fix bugs, write tests, and refactor. Its Agent Mode can even explore your codebase and make multi-file changes autonomously. Voice input makes this conversation flow naturally.

Copilot CLI

GitHub also offers Copilot CLI, a terminal-based coding agent available to all paid Copilot subscribers. It can plan tasks, edit files across your codebase, run tests, and iterate autonomously. WhisperTyping works perfectly with it: dictate your prompt, double-tap to send, and let Copilot CLI do the work.

Always Ready When You Are

Here's what makes WhisperTyping essential for Copilot users: your hotkey is always available. Whether you're reviewing code, testing your app, or reading documentation, hit your hotkey and start speaking. Your next prompt is ready to go before you even switch windows.

See a bug while testing? Hit the hotkey: "The login form isn't validating email addresses correctly." By the time you're back in your editor, your thought is captured and ready to paste into Copilot Chat.

Works in Every IDE

WhisperTyping works with Copilot in all supported environments, both Copilot Chat in your IDE and Copilot CLI in your terminal:

- VS Code: The most popular Copilot environment

- Visual Studio: Full support for .NET developers

- JetBrains IDEs: IntelliJ, PyCharm, WebStorm, and more

- Terminal: Copilot CLI for command-line workflows

Double-Tap to Send

The feature developers love most: double-tap your hotkey to automatically press Enter. Dictate your prompt and send it to Copilot in one motion.

Single tap starts recording. Double-tap stops, transcribes, and sends. No reaching for the keyboard. No breaking your flow.

Combine this with mouse activation and you can control everything with one hand. Use your middle mouse button or map a side button on mice like the Logitech MX Master to trigger WhisperTyping. Click to start recording, speak your prompt, double-click to send. Your other hand stays free for coffee.
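To make the single-tap and double-tap behavior concrete, here is a minimal Python sketch of how hotkey presses could be grouped into gestures and mapped to actions. It is an illustration only, not WhisperTyping's actual implementation: the 0.4-second window, the function names, and the action labels are all assumptions, as is the idea that a single tap while recording stops and types without pressing Enter.

```python
# Hypothetical sketch: not WhisperTyping's real logic or API.
DOUBLE_TAP_WINDOW = 0.4  # seconds; an assumed threshold, not a documented value


def group_taps(timestamps, window=DOUBLE_TAP_WINDOW):
    """Collapse sorted hotkey press times into 'single' and 'double' gestures."""
    gestures = []
    i = 0
    while i < len(timestamps):
        # Two presses within the window count as one double-tap.
        if i + 1 < len(timestamps) and timestamps[i + 1] - timestamps[i] <= window:
            gestures.append("double")
            i += 2
        else:
            gestures.append("single")
            i += 1
    return gestures


def actions_for(gestures):
    """Map gestures to actions, tracking whether a recording is in progress."""
    recording = False
    actions = []
    for gesture in gestures:
        if not recording:
            # Assumption: any gesture while idle starts a recording.
            recording = True
            actions.append("start_recording")
        elif gesture == "double":
            # Double-tap: stop, transcribe, then press Enter to send.
            recording = False
            actions.append("transcribe_and_press_enter")
        else:
            # Assumption: a single tap stops and types without sending.
            recording = False
            actions.append("transcribe_only")
    return actions
```

For example, presses at 0.0, 5.0, and 5.2 seconds group into one single tap followed by one double-tap, which this sketch maps to starting a recording and then transcribing and sending.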

Blazing Fast Transcription

Users love WhisperTyping for its snappiness. On a decent internet connection, the median transcription time is just 370 milliseconds. You stop speaking and your text appears almost instantly.

That responsiveness matters when you're iterating with Copilot. There's no awkward pause between finishing your thought and seeing it on screen. It feels like the tool is keeping up with you, not the other way around.

Custom Vocabulary

Common frameworks and libraries are recognized out of the box. Add words that are unique to your world:

- Your project name and internal codenames

- Names of colleagues and collaborators

- Company-specific terms, acronyms, and jargon

- Niche libraries or tools that speech recognition might not know

Screen-Aware Transcription

WhisperTyping reads your screen using OCR. When you're looking at code, it sees the same function names, error messages, and variables you do, and it uses them to transcribe accurately.

Why Voice for Copilot?

Copilot Chat works best with clear, contextual requests. Voice makes it effortless:

- Explain code while looking at it: "What does this regex pattern do?"

- Request changes naturally: "Add null checking to this function"

- Ask for tests: "Write unit tests for the authentication module"

- Debug issues: "Why might this be returning undefined?"

Tip: Tell Copilot You Use Voice

Add a note to your project's .github/copilot-instructions.md file saying that your input comes via voice transcription. Copilot reads this file automatically, so it can interpret your intent instead of stumbling over transcription errors. Something like:

"User input comes via voice dictation. Expect possible transcription errors like homophones, missing punctuation, or misheard words. Interpret intent rather than taking input literally."

Once Copilot knows to expect voice input, you can stop worrying about transcription accuracy. Just speak naturally, be descriptive, and double-tap to send. No need to review your transcription before sending.
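If you'd rather script this than edit the file by hand, a small Python helper along these lines could append the note. This is a sketch under stated assumptions: the function name add_voice_note is made up for illustration, and it assumes the instructions file lives at .github/copilot-instructions.md under the repository root you pass in.

```python
from pathlib import Path

# The note text suggested in the tip above.
VOICE_NOTE = (
    "User input comes via voice dictation. Expect possible transcription "
    "errors like homophones, missing punctuation, or misheard words. "
    "Interpret intent rather than taking input literally."
)


def add_voice_note(repo_root: str) -> bool:
    """Append the voice note to .github/copilot-instructions.md.

    Returns True if the file was changed, False if the note was already there.
    (Hypothetical helper for illustration; not part of WhisperTyping or Copilot.)
    """
    path = Path(repo_root) / ".github" / "copilot-instructions.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    existing = path.read_text(encoding="utf-8") if path.exists() else ""
    if VOICE_NOTE in existing:
        return False  # Avoid adding the same note twice.
    if existing and not existing.endswith("\n"):
        existing += "\n"
    if existing:
        existing += "\n"  # Blank line between earlier instructions and the note.
    path.write_text(existing + VOICE_NOTE + "\n", encoding="utf-8")
    return True
```

Run it once from a setup script with your repository path, for example add_voice_note("."). Calling it again leaves the file unchanged.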

Frequently Asked Questions

Can I use speech recognition with GitHub Copilot?

Yes. WhisperTyping adds speech recognition to GitHub Copilot on Windows. It works with Copilot Chat in VS Code, Visual Studio, and JetBrains IDEs, as well as the new Copilot CLI terminal agent. Your speech is transcribed and typed wherever your cursor is.

How do I dictate to Copilot Chat on Windows?

Install WhisperTyping, set a hotkey or enable mouse activation, then click into Copilot Chat and press your hotkey to start speaking. WhisperTyping transcribes with a median time of about 370 milliseconds on a decent internet connection and types directly into the chat. Double-tap to send your prompt instantly.

Does GitHub Copilot have built-in voice input?

GitHub Copilot does not have built-in voice input. You need a separate dictation tool like WhisperTyping. The advantage is that WhisperTyping works everywhere on your system: Copilot Chat, terminal, email, documentation, any text field.

Can I use voice typing with GitHub Copilot CLI?

Yes. Copilot CLI runs in your terminal, and WhisperTyping types your spoken prompts directly into the terminal input. Combined with double-tap to send and mouse activation, you can run Copilot CLI entirely with one hand on your mouse.